RobustMQ NATS Queue Group Subscription Design

What Is Queue Group Subscription

Regular subscriptions are 1:N — one published message is received by all subscribers. Queue group subscriptions are 1:1 — one published message is received by exactly one member of the queue group.

Regular subscription:

    PUB tasks.new → Worker A receives
                    Worker B receives
                    Worker C receives

Queue group subscription (same queue group):

    PUB tasks.new → Worker A receives (randomly selected)
                    Worker B does not receive
                    Worker C does not receive

Queue groups implement competing consumers at the broker layer: no client-side coordination is required, and no distributed locks. Scaling out is as simple as adding another subscriber with the same queue group name; scaling in is just disconnecting.
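The difference between the two delivery modes can be sketched with a toy in-memory broker. This is only an illustration of the semantics described above: `ToyBroker` and its methods are hypothetical names, not RobustMQ's or NATS's API.

```python
import random
from collections import defaultdict


class ToyBroker:
    """In-memory stand-in for a broker (hypothetical, for illustration only)."""

    def __init__(self, rng=None):
        self.rng = rng or random.Random()
        self.plain = defaultdict(list)                        # subject -> [subscriber]
        self.groups = defaultdict(lambda: defaultdict(list))  # subject -> group -> [subscriber]

    def subscribe(self, subject, name, queue_group=None):
        if queue_group is None:
            self.plain[subject].append(name)
        else:
            self.groups[subject][queue_group].append(name)

    def publish(self, subject):
        """Return the subscribers that receive one published message."""
        receivers = list(self.plain[subject])          # regular: every subscriber
        for members in self.groups[subject].values():  # queue group: one member each
            if members:
                receivers.append(self.rng.choice(members))
        return receivers
```

With three regular subscribers, `publish` returns all three names; with the same three subscribers in one queue group, it returns exactly one.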

Core Design Principle: Decoupling Write from Delivery

The most significant difference between RobustMQ's queue group design and native NATS is that the write path and the delivery path are completely decoupled.

Native NATS uses a real-time push model: routing decisions are made and messages are delivered immediately upon arrival at a node. RobustMQ uses a storage-driven pull-then-push model:

- The write node only writes to storage — it makes no routing decisions whatsoever

- Delivery is driven independently by the queue group's primary node, which reads from storage and then pushes

This design reduces the write path to a storage write and addresses message loss on the delivery side: if a push fails, the message is still in storage, so recovery is simply another read and push rather than a separate recovery mechanism.

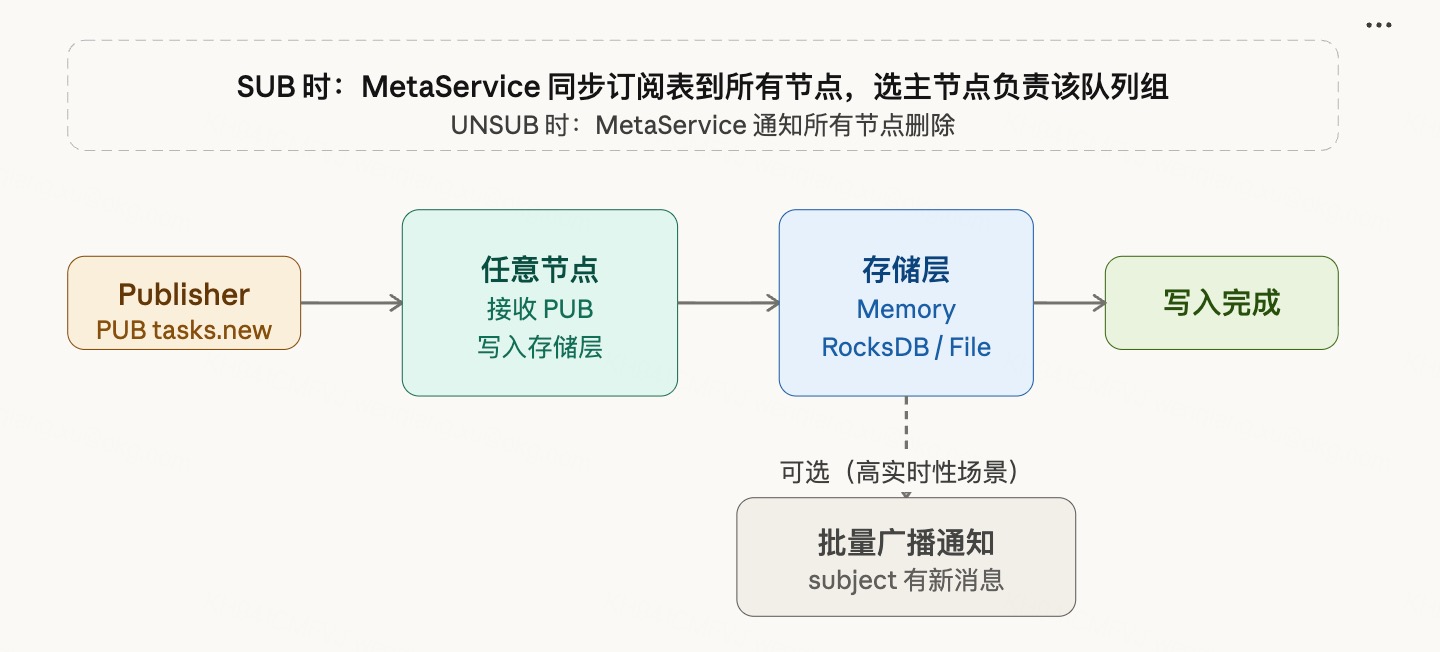

Subscription Sync

When a client issues a SUB, the subscription is synced to all nodes via MetaService, and a primary node is elected for that queue group.

    Client SUB tasks.new workers
      ↓
    MetaService syncs subscription table to all nodes
    Primary election: one node is designated to handle delivery for this queue group
      ↓
    On client UNSUB
    MetaService notifies all nodes to remove that subscription entry

Why elect a primary at SUB time rather than making a dynamic routing decision per message?

Routing at write time would require a global routing decision for every arriving message: querying the routing table, selecting a member, and deciding whether to deliver locally or remotely. Electing at SUB time moves that decision to the subscription establishment phase. It happens once, and the write path needs no routing logic at all, just a lookup for "which node is the primary for this queue group," then a direct forward.

RobustMQ already has primary election and failover built in. This design reuses that existing capability rather than introducing new complexity.
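A minimal sketch of SUB-time election, under stated assumptions: `ToyMetaService`, `QueueGroup`, and the election policy (the node of the first subscriber becomes primary) are all hypothetical. The real election is whatever RobustMQ's existing mechanism does.

```python
from dataclasses import dataclass, field


@dataclass
class QueueGroup:
    primary: str                                 # node that owns delivery
    members: dict = field(default_factory=dict)  # client_id -> node_id


class ToyMetaService:
    """Hypothetical subscription table standing in for MetaService."""

    def __init__(self):
        self.table = {}  # (subject, group) -> QueueGroup

    def sub(self, subject, group, client_id, node_id):
        key = (subject, group)
        if key not in self.table:
            # The first SUB creates the group and elects its primary once.
            # Assumed policy: the node the first member is connected to.
            self.table[key] = QueueGroup(primary=node_id)
        self.table[key].members[client_id] = node_id

    def unsub(self, subject, group, client_id):
        key = (subject, group)
        qg = self.table.get(key)
        if qg is None:
            return
        qg.members.pop(client_id, None)
        if not qg.members:
            del self.table[key]  # last member gone: the group dissolves

    def primary_for(self, subject, group):
        """The only per-message question left: who is the primary?"""
        qg = self.table.get((subject, group))
        return qg.primary if qg else None
```

The election cost is paid once in `sub`; every later query is a dictionary lookup in `primary_for`.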

Write Path

    Publisher PUB tasks.new
      ↓
    Message arrives at any node
      ↓
    Written to storage layer (Memory / RocksDB / File Segment)
      ↓
    Write acknowledged

    Optional (for low-latency scenarios):
    Batch broadcast notification → new message available on subject

The write node has no knowledge of subscriber locations and makes no delivery decisions. The write completes as soon as the storage layer accepts the message, which keeps the write path as short as possible.

The broadcast notification is an optional acceleration mechanism. It carries only the subject name — not the message payload — and is sent in batches to control overhead. When broadcast notification is disabled, delivery is driven entirely by the primary node's periodic pull loop, which is sufficient for most use cases.
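The write path with optional batched notifications might look like the following sketch. `ToyWriteNode`, the dictionary used as storage, and the `batch_size` flush threshold are hypothetical. A real implementation would presumably also flush on a timer so that a partial batch is not held indefinitely.

```python
class ToyWriteNode:
    """Sketch of a write node: store the message, optionally queue a notification."""

    def __init__(self, storage, notify=None, batch_size=3):
        self.storage = storage        # subject -> list of payloads (storage stand-in)
        self.notify = notify          # callable taking a set of subjects; None = disabled
        self.batch_size = batch_size  # hypothetical flush threshold
        self.pending = set()          # subjects with writes not yet announced

    def publish(self, subject, payload):
        log = self.storage.setdefault(subject, [])
        log.append(payload)            # the only mandatory step
        offset = len(log) - 1
        if self.notify is not None:
            self.pending.add(subject)  # subject name only, never the payload
            if len(self.pending) >= self.batch_size:
                self.flush()
        return offset                  # acknowledged once stored

    def flush(self):
        """Send one notification covering every pending subject."""
        if self.notify is not None and self.pending:
            self.notify(frozenset(self.pending))
            self.pending.clear()
```

Note that `publish` never consults a subscription table: with `notify=None` it is a pure storage append.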

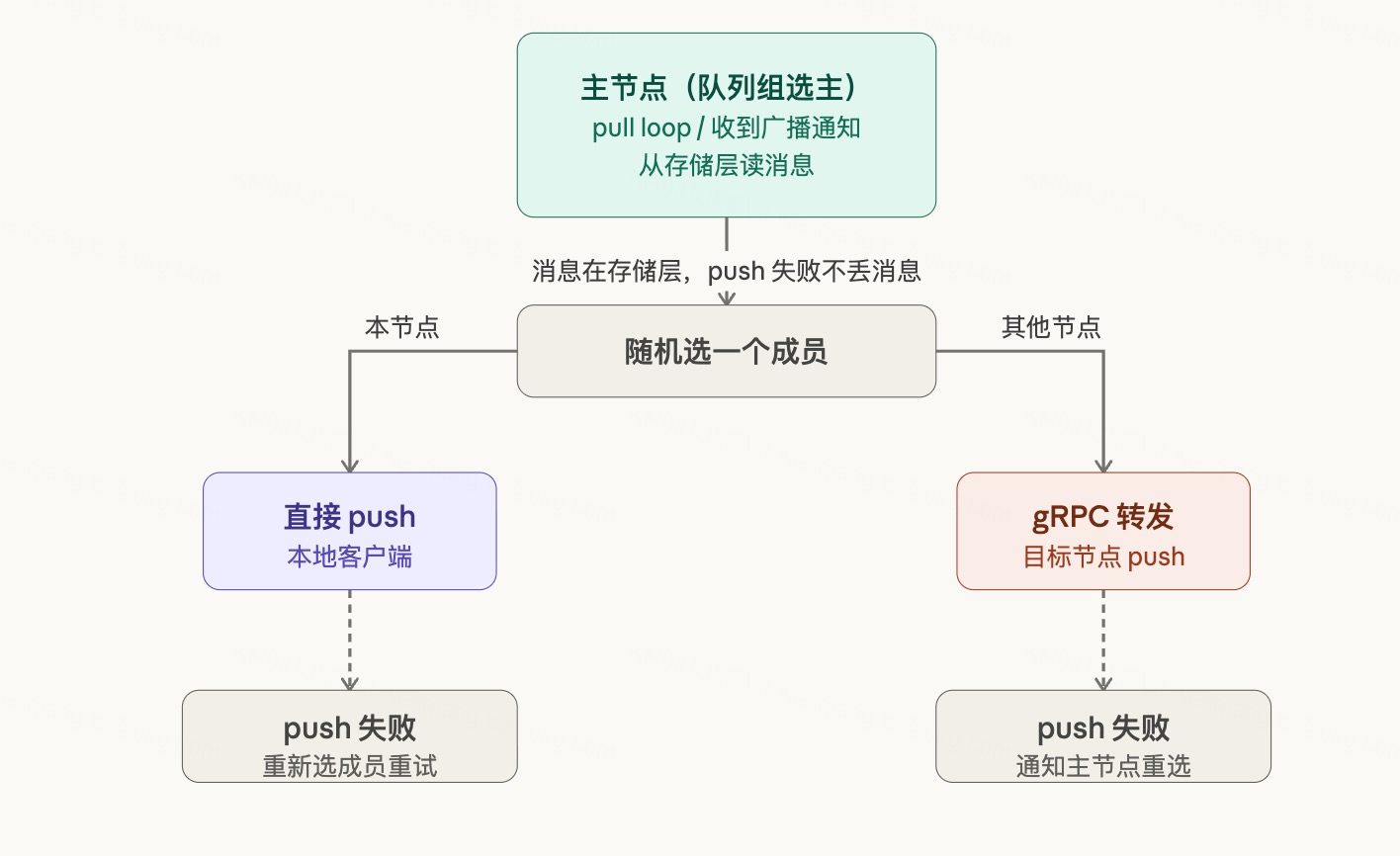

Delivery Path

Delivery is driven independently by the queue group's primary node.

Trigger mechanisms (two, complementary):

- Periodic pull loop (primary path): The primary node continuously polls storage. If there are messages, they are processed immediately; if not, the node waits 20–50ms before polling again. This is simple to implement and gives stable, predictable performance.

- Broadcast notification wake-up (fast path): The write node broadcasts a "new message" signal; the primary node wakes up early without waiting for the next poll interval. Best suited for low-frequency write scenarios requiring low latency.

Together, the two mechanisms cover the full frequency spectrum: low-frequency writes benefit from timely notifications, while high-frequency writes are well-served by the pull loop running continuously — notifications add little marginal value there.
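The two triggers combine naturally into a single loop: an idle wait with a timeout that a notification can cut short. The sketch below uses Python's `threading.Event` for this. `PullLoop`, `fetch`, and `deliver` are hypothetical names, and the 50 ms default is simply the upper end of the range mentioned above.

```python
import threading


class PullLoop:
    """Pull loop with an early wake-up; a sketch, not RobustMQ's implementation."""

    def __init__(self, fetch, deliver, idle_wait=0.05):
        self.fetch = fetch          # returns a list of new messages (possibly empty)
        self.deliver = deliver      # called once per message
        self.idle_wait = idle_wait  # seconds to wait when storage has nothing new
        self.wake = threading.Event()
        self.stopped = threading.Event()

    def notify(self):
        """Called when a broadcast notification arrives (fast path)."""
        self.wake.set()

    def stop(self):
        self.stopped.set()
        self.wake.set()  # unblock the loop if it is idle

    def run(self):
        while not self.stopped.is_set():
            batch = self.fetch()
            if batch:
                for msg in batch:
                    self.deliver(msg)
                continue  # more may be waiting: poll again immediately
            # Idle: return when the interval elapses or a notification arrives.
            self.wake.wait(self.idle_wait)
            self.wake.clear()
```

Under sustained load the loop never reaches the wait, so notifications have no effect. Under light load `notify` shortens the delay from up to `idle_wait` to roughly the wake-up time of the thread.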

Delivery mechanism (two options, selected based on member location):

    Primary node reads message
      ↓
    Randomly selects one queue group member
      ↓
    Member is local  → push directly to client
    Member is remote → forward via gRPC to target node → target node pushes to client

Push failure handling:

- Local push failure: re-select a member and retry

- Remote push failure (after gRPC forward): target node notifies the primary, primary re-selects a member

The message always remains in storage, so a push failure never causes message loss — just re-select and retry.
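The selection and retry logic can be sketched as one function. The names `deliver_once`, `push_local`, and `forward_remote` are hypothetical, with `forward_remote` standing in for the gRPC hop. One assumption goes beyond the text: re-selection here skips members that have already failed for this message, which shuffling the member list once achieves.

```python
import random


def deliver_once(message, members, local_node, push_local, forward_remote, rng=random):
    """Try queue group members in random order until one push succeeds.

    members:        dict of client_id -> node_id
    push_local:     callable(client_id, message) -> bool
    forward_remote: callable(node_id, client_id, message) -> bool
    Returns the client_id that received the message, or None if all pushes failed.
    """
    candidates = list(members)
    rng.shuffle(candidates)  # random selection; later entries are the re-selections
    for client_id in candidates:
        node_id = members[client_id]
        if node_id == local_node:
            ok = push_local(client_id, message)
        else:
            ok = forward_remote(node_id, client_id, message)
        if ok:
            return client_id
    # Every member failed. The message is still in storage, so nothing is
    # lost; a later pull can attempt delivery again.
    return None
```

Returning `None` instead of raising reflects the design point above: a failed delivery is not an error state, because the stored message remains the source of truth.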

Queue Group Lifecycle

Queue groups require no explicit creation or destruction. The group comes into existence automatically when the first SUB with that queue group name arrives, at which point MetaService completes primary election. The group dissolves automatically when the last member UNSUBs, at which point the primary node stops its pull loop.

Members joining and leaving dynamically is completely transparent to message delivery — new members are immediately eligible for selection, and when a member leaves, the primary automatically routes to the remaining members.

Handling Disconnections

When a client connection is lost (either intentional disconnect or heartbeat timeout):

    Server detects connection loss
      ↓
    Notifies all nodes via MetaService to remove that subscription
      ↓
    If the disconnected client belonged to the queue group's primary node
      → Re-elect a primary from the remaining members
    If the disconnected client was a regular member
      → The primary automatically skips it on the next member selection

Because messages are persisted in the storage layer, messages arriving during the disconnect window are never lost. Delivery resumes normally via the pull loop once a member is available.
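The disconnect flow can be sketched as a small state update. This rests on one reading of the flow above: "belonged to the primary node" is taken to mean the client was connected to the node currently acting as primary. The function name, the dictionary layout, and the election rule (lowest node ID among the nodes still hosting a member) are hypothetical placeholders for RobustMQ's existing election.

```python
def handle_disconnect(group, client_id):
    """Remove a disconnected client from a queue group.

    group: {"primary": node_id, "members": {client_id: node_id}}
    Mutates and returns the group, or returns None when the group dissolves.
    """
    node_id = group["members"].pop(client_id, None)
    if node_id is None:
        return group  # unknown client: nothing to do
    if not group["members"]:
        return None   # last member gone: the group dissolves
    if node_id == group["primary"]:
        # Assumed placeholder policy: re-elect among the nodes that still
        # host a member, picking the lowest node ID for determinism.
        group["primary"] = min(group["members"].values())
    # Otherwise no action is needed: the removed member is simply absent
    # from the next selection.
    return group
```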

Design Summary

| Dimension | Design Choice | Rationale |
|---|---|---|
| Primary election timing | At SUB time | Zero routing overhead on the write path |
| Write path | Write to storage only, no routing | Maximum simplicity and performance |
| Delivery trigger | Pull loop + broadcast notification | Covers full frequency spectrum |
| Cross-node delivery | gRPC forwarding | Reuses existing internal communication |
| Failure handling | Re-select member and retry | Message remains in storage, no loss |
| Primary election / failover | Reuse existing RobustMQ capability | No new complexity introduced |

Core idea: transform NATS's real-time push model into a storage-driven pull-then-push model. Write and delivery are fully decoupled. The write path is kept minimal. The primary node independently owns the delivery path. Failures have a guaranteed fallback.