Meta Service System Architecture

Meta Service is RobustMQ's built-in metadata storage component. Its role is analogous to that of ZooKeeper for Kafka or NameServer for RocketMQ. It is responsible for cluster coordination, node discovery, metadata storage, and storage of some KV-style business data.

From a functional perspective, Meta Service covers:

- Cluster coordination: Cluster formation, node discovery and health checks, inter-node data distribution, and related coordination

- Metadata storage: Persistence of cluster-related and message-queue-protocol-related metadata, such as Broker info, Topic info, connector info, etc.

- KV-style business data storage: Some runtime data produced while the message queue operates must be persisted, such as MQTT Session data, retained messages, will messages, etc. Because the scale of this data is predictable, Meta Service stores it as well.

- Controller: Cluster-level scheduling, such as node failover, Connector task scheduling, etc.

Thus, Meta Service bears many responsibilities in RobustMQ. By contrast, ZooKeeper in a Kafka deployment handles only metadata storage and cluster coordination. Meta Service takes on more because, as a built-in custom component, it is meant to enable an architecture in which compute, storage, and coordination are fully separated.

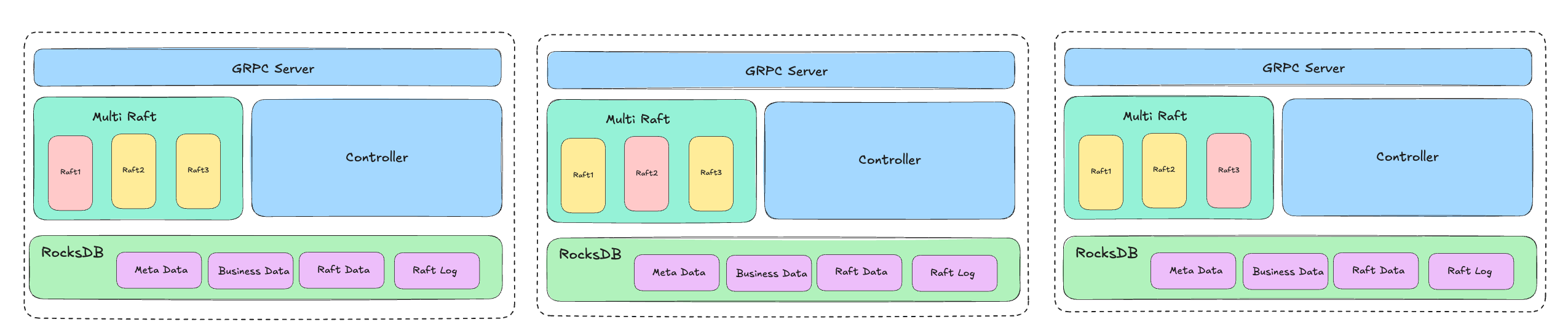

System Architecture

As shown above, from a technical standpoint Meta Service's core is: gRPC + Multi Raft + RocksDB.

Meta Nodes communicate with each other via gRPC and also expose gRPC services externally. Data consistency among nodes is guaranteed by the Raft protocol. To improve performance, the system uses a Multi Raft architecture: multiple Raft state machines run within the Meta Service cluster, and each request is handled by the state machine suited to it. Currently three state machines are supported: Metadata RaftMachine, Offset RaftMachine, and Data RaftMachine.
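As a rough illustration of how a request could be matched to one of the three state machines, here is a minimal sketch. The request names and the `ROUTES` table are hypothetical and chosen only for illustration; the actual assignment in RobustMQ may differ.

```python
from enum import Enum


class RaftMachine(Enum):
    # The three state machines named in the text.
    METADATA = "metadata"
    OFFSET = "offset"
    DATA = "data"


# Hypothetical mapping from request kind to state machine; the real
# assignment in RobustMQ may differ.
ROUTES = {
    "create_topic": RaftMachine.METADATA,
    "register_broker": RaftMachine.METADATA,
    "commit_offset": RaftMachine.OFFSET,
    "save_session": RaftMachine.DATA,
}


def route(request_kind: str) -> RaftMachine:
    """Pick the Raft state machine that should handle a request."""
    try:
        return ROUTES[request_kind]
    except KeyError:
        raise ValueError(f"unknown request kind: {request_kind}") from None
```

Because each state machine has its own Leader and log, requests routed to different machines do not queue behind one another.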

Meta Service data is persisted in RocksDB. Specifically, when data is written, it is replicated to multiple nodes through the Raft Machine. Each node that receives the data writes it to RocksDB. Raft state machine data and Raft logs are also persisted in RocksDB.
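The write path described above can be sketched as follows. This is a deliberately simplified model, not real Raft: it has no terms, elections, or log repair, and a plain dict stands in for each node's RocksDB. The `Node` class and `replicate_write` function are hypothetical names.

```python
class Node:
    """One Meta Service node; a dict stands in for the local RocksDB."""

    def __init__(self, name: str, online: bool = True):
        self.name = name
        self.online = online
        self.log = []    # replicated log entries as (key, value) pairs
        self.store = {}  # applied state, stand-in for RocksDB

    def append(self, entry) -> bool:
        # A follower acknowledges only if it is reachable.
        if not self.online:
            return False
        self.log.append(entry)
        return True

    def apply(self, entry) -> None:
        key, value = entry
        self.store[key] = value


def replicate_write(leader: Node, followers: list, key: str, value: str) -> bool:
    """Append on the leader, replicate, and apply once a majority has the entry."""
    entry = (key, value)
    leader.log.append(entry)
    acked = [leader] + [f for f in followers if f.append(entry)]
    cluster_size = 1 + len(followers)
    if len(acked) <= cluster_size // 2:
        return False  # no majority: the entry is not committed
    for node in acked:
        node.apply(entry)
    return True
```

In a three-node cluster, a write succeeds when the Leader and at least one follower hold the entry, and fails when the Leader alone holds it.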

When Meta Service starts, the system completes cluster formation according to the Raft protocol and elects a Leader through voting. At the same time, the controller thread starts on the Leader of the Metadata RaftMachine to perform cluster scheduling and management (e.g., MQTT Connector scheduling and management).
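A minimal sketch of tying the controller to leadership of the Metadata RaftMachine is shown below. The `Controller` class and its callback are hypothetical, and stopping the controller when leadership is lost is an assumption; the text only states that it starts on the Leader.

```python
class Controller:
    """Runs scheduling work only while this node leads the Metadata group."""

    def __init__(self):
        self.running = False

    def on_leadership_change(self, group: str, is_leader: bool) -> None:
        # Leadership of the Offset or Data groups does not affect the controller.
        if group != "metadata":
            return
        # Assumption: the controller stops when Metadata leadership is lost.
        self.running = is_leader
```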

On Performance

Meta Service's core requirement is performance. Existing metadata coordination services such as ZooKeeper and etcd are constrained in throughput and memory usage. ZooKeeper is limited mainly by its single-Leader architecture, and because it keeps all data in memory, its data capacity is limited as well. etcd similarly has a single-Raft bottleneck; it offers larger capacity than ZooKeeper but still has limits.

Architecturally, Meta Service is expected to carry multiple functions over the long term, including data storage. In essence it combines a metadata coordination service with a KV storage engine. The design therefore avoids both the single-Raft limitation and the memory-capacity limitation.

The system supports multiple Raft state machines through the Multi Raft mechanism. Although only three are deployed today, the design allows the number to grow as needed. For example, when metadata grows large or Offset commits become frequent, the number of Metadata RaftMachine and Offset RaftMachine instances can be expanded from 1 to 2 or more, so that multiple Leaders serve requests in parallel and throughput improves.
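Once a state machine type has more than one instance, each key has to be assigned to one of them. The text does not say how RobustMQ does this; the sketch below assumes a simple stable hash, and `pick_group` is a hypothetical helper.

```python
import zlib


def pick_group(key: str, group_count: int) -> int:
    """Map a key to one of `group_count` Raft groups with a stable hash."""
    if group_count < 1:
        raise ValueError("group_count must be at least 1")
    # crc32 is stable across processes, unlike Python's built-in hash().
    return zlib.crc32(key.encode("utf-8")) % group_count
```

Plain modulo hashing remaps most keys whenever the group count changes, so a real design would also need a migration step or a consistent-hashing scheme.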

Furthermore, the system uses RocksDB for KV data storage, relying on its read/write performance. Under this design, memory usage is strictly controlled: only part of the hot data is kept in memory, and some data bypasses the memory cache entirely and is read from and written to RocksDB directly. This sacrifices some performance, but it lets the system handle scenarios where metadata scale explodes (e.g., millions or tens of millions of Topics). In MQTT scenarios with hundreds of millions of connections, the corresponding hundreds of millions of Session entries do not consume excessive memory either.
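The memory policy above can be illustrated with a bounded cache in front of the persistent store. This is a sketch under assumptions: the LRU eviction policy and the per-call `cacheable` flag are illustrative choices not stated in the text, and a dict stands in for RocksDB.

```python
from collections import OrderedDict


class BoundedCacheStore:
    """Keeps at most `capacity` hot entries in memory; the rest stay on disk."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.cache = OrderedDict()  # hot entries, least recently used first
        self.disk = {}              # stand-in for RocksDB

    def put(self, key, value, cacheable=True):
        self.disk[key] = value  # every write reaches the store
        if cacheable:
            self._remember(key, value)
        else:
            self.cache.pop(key, None)  # drop any stale cached copy

    def get(self, key, cacheable=True):
        if key in self.cache:
            self.cache.move_to_end(key)
            return self.cache[key]
        value = self.disk.get(key)
        if cacheable and value is not None:
            self._remember(key, value)
        return value

    def _remember(self, key, value):
        self.cache[key] = value
        self.cache.move_to_end(key)
        while len(self.cache) > self.capacity:
            self.cache.popitem(last=False)  # evict the least recently used
```

With this shape, memory use is bounded by `capacity` regardless of how many entries exist, and high-volume data such as Sessions can be written with `cacheable=False` so that it never occupies the cache.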

Thus, a custom built-in metadata coordination service has clear advantages. It can be deeply optimized for message-queue-specific scenarios, reaching a level of performance, functionality, and stability that is hard to achieve with generic components. This is one reason systems have been moving away from external coordinators such as ZooKeeper and etcd, and it is the core motivation for RobustMQ's custom Meta Service.

On the Network Layer

In the long term, Meta Service's performance bottleneck may appear in the network layer. In theory gRPC performs well, but it may struggle in some extreme scenarios. For example, when an MQTT cluster restarts and hundreds of millions of connections reconnect simultaneously, Brokers may hit Meta Service with high-frequency requests, and this is when a Meta Service bottleneck is most likely. Two optimization approaches address such scenarios:

- Batch semantics

- Replacing gRPC

Batch semantics means supporting batch operations for certain calls. For example, when creating/updating Session info, Brokers can call Meta Service to create/update multiple Sessions in one call, reducing the frequency of Meta Service calls from Brokers.
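On the Broker side, batching could look roughly like the sketch below. `SessionBatcher` and the `send_batch` callable are hypothetical; `send_batch` stands in for whatever batch RPC Meta Service exposes, and the size-based flush threshold is an assumption.

```python
class SessionBatcher:
    """Buffers session updates and sends them in one call per batch."""

    def __init__(self, send_batch, max_batch: int = 100):
        self.send_batch = send_batch  # hypothetical RPC taking a list of sessions
        self.max_batch = max_batch
        self.pending = []

    def save(self, session: dict) -> None:
        self.pending.append(session)
        if len(self.pending) >= self.max_batch:
            self.flush()

    def flush(self) -> None:
        if not self.pending:
            return
        # Swap the buffer out before sending so new saves start a fresh batch.
        batch, self.pending = self.pending, []
        self.send_batch(batch)
```

With `max_batch=100`, saving 1,000 Sessions results in 10 calls to Meta Service instead of 1,000. A production version would likely also flush on a timer so that a partially filled batch does not wait indefinitely.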

In the long-term plan, if gRPC does become a bottleneck, replacing it with a protocol built directly on TCP or QUIC may be considered. Technically, TCP generally performs better than QUIC on stable intranet links, while QUIC generally performs better than TCP in cross-partition, cross-region, or poor-network scenarios. So replacing gRPC is a long-term possibility, but there are no plans for it in the short term.

Summary

In summary, Meta Service is a metadata storage service and KV storage engine built with gRPC + Multi Raft + RocksDB + Batch semantics, highly optimized and adapted for the RobustMQ architecture.